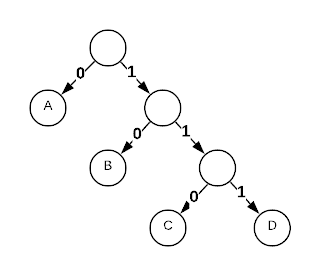

I am doing a project on Huffman coding and wanted to know when it wouldn't be ideal to use it, or rather, when Huffman coding would produce low compression. Since Huffman coding mainly revolves around the frequencies of the characters present in the input text, I believe the answer is also going to be related to that. Wikipedia says: "The worst case for Huffman coding can happen when the probability of a symbol exceeds $2^{-1} = 0.5$, making the upper limit of inefficiency unbounded."

According to Wikipedia, then, if the probability of a symbol exceeds 0.5 (that is, if one character appears very often in the input text), Huffman coding would produce bad compression. So if a character appears more often in the input text, does that ensure good or bad compression? From what I understand, in Huffman coding the more often a character appears, the better the compression we get, right? Anyway, I just want to know for which types of files or text data Huffman coding would give bad compression. Maybe I am overthinking, but a little help would be great. P.S.: I don't think this question is subjective; I know there is a specific answer to it. All I want is a worst case.

The worst case for Huffman coding (or, equivalently, the longest Huffman code for a set of characters) is when the distribution of frequencies follows the Fibonacci numbers. According to Alex Vinokur, a sample Huffman encoding of a sample Fibonacci distribution was 2.3846 times longer than the original. For this and other relations, see Alex Vinokur's note on Fibonacci numbers, Lucas numbers and Huffman codes. As I understand it, the inefficiency gets worse as the alphabet grows, which is why Wikipedia talks about unbounded inefficiency. See example 3.1.1.

A more useful description comes from your Wikipedia quote: "The worst case for Huffman coding can happen when the probability of a symbol exceeds $2^{-1} = 0.5$, making the upper limit of inefficiency unbounded. These situations often respond well to a form of blocking called run-length encoding." Note that it is not the fact that a single character is prevalent that's the issue; it is the distribution of the frequencies that's the problem. This happens when a few letters in the alphabet are much more prevalent than others, a skewed distribution as described in the NIST quote above. This is common in uncompressed bitmap files.
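To see what Fibonacci frequencies do to the tree, here is a minimal Python sketch (not Vinokur's code) that computes Huffman code lengths with `heapq` and feeds it the first eight Fibonacci numbers as hypothetical symbol counts. The helper names `huffman_code_lengths` and `fibonacci` and the symbols `s0` to `s7` are made up for illustration. Because the sum of the first k Fibonacci numbers is $F_{k+2} - 1$, the merged subtree is always paired with the next Fibonacci weight, so the tree degenerates into a chain.

```python
import heapq
from itertools import count

def huffman_code_lengths(freqs):
    """Return {symbol: code length in bits} for a Huffman code over freqs.

    freqs maps each symbol to a positive count. A running counter breaks
    ties so the heap never has to compare the dicts stored in its entries.
    """
    if len(freqs) == 1:
        # Degenerate alphabet: give the lone symbol a 1-bit code.
        return {sym: 1 for sym in freqs}
    tie = count()
    # Heap entries: (subtree weight, tie-breaker, {symbol: depth in subtree})
    heap = [(w, next(tie), {sym: 0}) for sym, w in freqs.items()]
    heapq.heapify(heap)
    while len(heap) > 1:
        w1, _, left = heapq.heappop(heap)
        w2, _, right = heapq.heappop(heap)
        # Joining two subtrees under a new node pushes every symbol one level down.
        merged = {sym: depth + 1 for sym, depth in left.items()}
        merged.update({sym: depth + 1 for sym, depth in right.items()})
        heapq.heappush(heap, (w1 + w2, next(tie), merged))
    return heap[0][2]

def fibonacci(n):
    """First n Fibonacci numbers starting 1, 1, 2, 3, ..."""
    seq, a, b = [], 1, 1
    for _ in range(n):
        seq.append(a)
        a, b = b, a + b
    return seq

# Hypothetical symbol counts s0..s7 = 1, 1, 2, 3, 5, 8, 13, 21.
freqs = {f"s{i}": f for i, f in enumerate(fibonacci(8))}
lengths = huffman_code_lengths(freqs)
for sym, bits in sorted(lengths.items()):
    print(sym, freqs[sym], bits)
```

With these weights the two rarest symbols get 7-bit codes and the most common one a 1-bit code, i.e. the code lengths run 7, 7, 6, 5, 4, 3, 2, 1. That is the maximum possible depth (n − 1) for 8 symbols, whereas a balanced tree would give every symbol 3 bits.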
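The Wikipedia point about a symbol with probability above 0.5 can be checked numerically. Symbol-by-symbol Huffman coding never spends less than 1 bit on a symbol, so on a two-letter alphabet it always uses exactly 1 bit per symbol, while the entropy $H(p) = -p\log_2 p - (1-p)\log_2(1-p)$ falls toward 0 as $p \to 1$; the ratio between the two therefore grows without bound. Coding blocks of several symbols at once narrows the gap. The sketch below assumes independent binary symbols, uses hypothetical helper names, and uses fixed-size blocks rather than run-length encoding, which is a different form of blocking.

```python
import heapq
import math
from itertools import product

def entropy(probs):
    """Shannon entropy in bits: the lower bound on average bits per symbol."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def huffman_avg_length(probs):
    """Average Huffman codeword length (bits) for the given probabilities.

    Uses the fact that the expected codeword length equals the sum of the
    weights of all internal nodes created while merging.
    """
    if len(probs) == 1:
        return 1.0  # a lone symbol still costs 1 bit per occurrence
    heap = list(probs)
    heapq.heapify(heap)
    total = 0.0
    while len(heap) > 1:
        merged = heapq.heappop(heap) + heapq.heappop(heap)
        total += merged
        heapq.heappush(heap, merged)
    return total

def block_probs(p, k):
    """Probabilities of all 2**k blocks of k independent binary symbols,
    where the more common symbol has probability p."""
    return [math.prod(p if bit else 1 - p for bit in bits)
            for bits in product((0, 1), repeat=k)]

for p in (0.6, 0.9, 0.99):
    h = entropy([p, 1 - p])
    # Symbol-by-symbol Huffman on a 2-letter alphabet always spends 1 bit.
    print(f"p={p}: entropy={h:.3f} bits, Huffman=1 bit, ratio={1 / h:.1f}x")

p = 0.9
for k in (1, 2, 3):
    per_symbol = huffman_avg_length(block_probs(p, k)) / k
    print(f"block size {k}: {per_symbol:.3f} bits/symbol")
```

For $p = 0.9$ the entropy is about 0.469 bits per symbol; Huffman coding of single symbols costs 1 bit, of pairs about 0.645 bits per symbol, and of triples about 0.533, so larger blocks approach the entropy. At $p = 0.99$, single-symbol Huffman coding spends roughly 12 times the entropy.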
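Run-length encoding, mentioned in the Wikipedia quote, replaces each run of identical symbols with a (symbol, length) pair, so a long run costs one pair instead of many separate codewords. Below is a minimal Python sketch using `itertools.groupby`; the function names are hypothetical, the sample row is an assumed stretch of a mostly blank 1-bit bitmap, and in practice the run lengths would themselves be entropy-coded (for example with Huffman coding), which this sketch leaves out.

```python
from itertools import groupby

def run_length_encode(data):
    """Collapse each run of identical symbols into a (symbol, run length) pair."""
    return [(sym, len(list(group))) for sym, group in groupby(data)]

def run_length_decode(pairs):
    """Expand (symbol, run length) pairs back into the original string."""
    return "".join(sym * length for sym, length in pairs)

# Hypothetical row of a mostly blank 1-bit bitmap: long runs of '0'.
row = "0" * 30 + "1" * 4 + "0" * 30
runs = run_length_encode(row)
print(runs)
assert run_length_decode(runs) == row  # round trip is lossless
```

The 64-pixel row collapses to three pairs (30 zeros, 4 ones, 30 zeros), which is why heavily skewed data such as uncompressed bitmaps responds well to this kind of blocking.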
